Common Vulnerability Scoring System (CVSS) scores have been in wide use in vulnerability management programs for more than a decade. First released in 2005, CVSS has gone through three major versions and a number of minor revisions. The most recent major change was the move from CVSSv2 to CVSSv3, with CVSSv3.1 being the current revision. CVSSv3 was designed to correct shortcomings in v2, and the security community broadly judges it to have closed some, but not all, of them.

CVSS Major Versions

CVSS v1

The US National Infrastructure Advisory Council (NIAC) worked through 2003/2004 to come up with a framework that would provide a standard for severity ratings of vulnerabilities in software. The result, CVSSv1, was first released in 2005 and handed off to the Forum of Incident Response and Security Teams (FIRST) to maintain going forward. CVSSv1 was widely viewed as having significant issues, and work began immediately on its successor, CVSSv2.

CVSS v2

CVSSv2 launched in 2007 and was widely adopted by vendors and enterprises as a common language for comparing software vulnerabilities. Despite wide adoption, v2 also had significant issues to be addressed, so after five years of use, work began on CVSSv3.

CVSS v3

Work on CVSSv3 began in 2012, with the 3.0 revision being released in 2015. The most recent revision, CVSSv3.1, was released in mid-2019.

Shortcomings of CVSSv2

Critics of v2 believed that the scoring system required users to have too much detailed knowledge of the exact impact of a vulnerability to be useful in practice. There were also complaints that several metrics were too coarse to distinguish between different types of vulnerabilities. In 2013, Risk Based Security wrote an open letter to FIRST outlining the issues and shortcomings of CVSSv2.

Differences Between CVSSv2 and CVSSv3

The authors of CVSSv3 worked to introduce scoring changes that more accurately reflected the reality of vulnerabilities encountered in the wild. The three major metric groups (Base, Temporal, and Environmental) remained in place, but with changes within both the Base and the Environmental groups.

In the Base group, several changes were made:

- Confidentiality, Integrity, and Availability metrics were each changed to have scoring parameters of None, Low, or High (replacing v2's None, Partial, and Complete).

- The Attack Vector metric (called Access Vector in v2) added the Physical (P) value, which indicates a vulnerability where the adversary must have physical access to a system in order to exploit it.

- A new metric, User Interaction (UI), was added. This metric indicates whether or not the cooperation of a legitimate user is needed to conduct an exploit.

- Another new metric, Privileges Required (PR), was added to indicate the level of privileges (None, Low, or High) an attacker must already hold on the target system in order to successfully exploit the vulnerability.
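To make the effect of these Base metrics concrete, here is a minimal Python sketch that parses a CVSSv3 vector string and computes a base score. The weights and equations are reproduced from the CVSS v3.1 base equations as best understood here and should be checked against the FIRST specification before being relied on. The function names (`parse_vector`, `roundup`, `base_score`) are illustrative, not part of any official library. The vector also carries Attack Complexity (AC) and Scope (S), two Base metrics not covered in the list above.

```python
# Numeric weights as given in the CVSS v3.1 base equations (verify against the spec).
WEIGHTS = {
    "AV": {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2},  # P (Physical) is new in v3
    "AC": {"L": 0.77, "H": 0.44},
    "UI": {"N": 0.85, "R": 0.62},                        # new metric in v3
    "C": {"H": 0.56, "L": 0.22, "N": 0.0},
    "I": {"H": 0.56, "L": 0.22, "N": 0.0},
    "A": {"H": 0.56, "L": 0.22, "N": 0.0},
}
# Privileges Required (new in v3) is weighted differently when Scope is Changed.
PR_WEIGHTS = {
    "U": {"N": 0.85, "L": 0.62, "H": 0.27},
    "C": {"N": 0.85, "L": 0.68, "H": 0.5},
}


def parse_vector(vector):
    """Split 'CVSS:3.1/AV:N/...' into a {metric: value} dict."""
    prefix, *fields = vector.split("/")
    if prefix not in ("CVSS:3.0", "CVSS:3.1"):
        raise ValueError(f"not a CVSS v3 vector: {vector!r}")
    return dict(field.split(":", 1) for field in fields)


def roundup(value):
    """Round up to one decimal place using the integer-based v3.1 definition."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (scaled // 10000 + 1) / 10.0


def base_score(vector):
    """Compute the base score for a v3 vector containing all eight Base metrics."""
    m = parse_vector(vector)
    changed = m["S"] == "C"
    iss = 1 - ((1 - WEIGHTS["C"][m["C"]])
               * (1 - WEIGHTS["I"][m["I"]])
               * (1 - WEIGHTS["A"][m["A"]]))
    if changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss
    exploitability = (8.22 * WEIGHTS["AV"][m["AV"]] * WEIGHTS["AC"][m["AC"]]
                      * PR_WEIGHTS[m["S"]][m["PR"]] * WEIGHTS["UI"][m["UI"]])
    if impact <= 0:
        return 0.0
    total = impact + exploitability
    if changed:
        total *= 1.08
    return roundup(min(total, 10.0))


# Same impact, but network access versus physical access to the device.
print(base_score("CVSS:3.1/AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H"))  # 9.8
print(base_score("CVSS:3.1/AV:P/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H"))  # 6.8
```

Changing only the Attack Vector from Network to Physical drops the score by three full points, which illustrates how much weight the exploitability metrics carry.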

In the Environmental group, the biggest change was that the v2 metrics Collateral Damage Potential and Target Distribution were replaced with what are known as Modified Base metrics, while the Security Requirements metrics were retained. Essentially, each of the Base metrics may be overridden by the organization to reflect how its own situation and environment differ from the general case.
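The override idea can be sketched in a few lines of Python. This is only an illustration of how modified values replace Base values: the helper name `effective_metrics` is hypothetical, the `M`-prefixed metric names and the `X` (Not Defined) value follow the v3 vector notation as understood here, and the sketch deliberately stops short of computing the Environmental score, which uses its own equations.

```python
BASE_METRICS = ("AV", "AC", "PR", "UI", "S", "C", "I", "A")


def effective_metrics(base, modified):
    """Overlay organization-specific overrides on the published Base metrics.

    `base` maps metric names (e.g. "AV") to values; `modified` maps the
    M-prefixed names (e.g. "MAV") to overrides. A missing override or the
    value "X" (Not Defined) falls back to the Base value.
    """
    effective = {}
    for name in BASE_METRICS:
        override = modified.get("M" + name, "X")
        effective[name] = base[name] if override == "X" else override
    return effective


# Published Base metrics for a network-exploitable vulnerability.
published = {"AV": "N", "AC": "L", "PR": "N", "UI": "N",
             "S": "U", "C": "H", "I": "H", "A": "H"}

# Hypothetical environment: the affected host is reachable only locally,
# and it holds no confidential data.
ours = effective_metrics(published, {"MAV": "L", "MC": "N", "MI": "X"})
print(ours["AV"], ours["C"], ours["I"])  # L N H
```

Keeping the published metrics and the overrides in separate dictionaries makes it easy to see which values reflect the vendor's assessment and which reflect local conditions.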

CVSSv3 Scoring Scale vs CVSSv2

CVSSv2 qualitative scoring mapped the 0-10 score ranges to one of three severities:

- Low – 0.0 – 3.9

- Medium – 4.0 – 6.9

- High – 7.0 – 10.0

With CVSSv3, the same 0-10 scoring range is now mapped to five different qualitative severity ratings:

- None – 0.0

- Low – 0.1 – 3.9

- Medium – 4.0 – 6.9

- High – 7.0 – 8.9

- Critical – 9.0 – 10.0
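The two scales above translate directly into lookup functions. The following Python sketch uses the ranges exactly as listed; the function names `severity_v2` and `severity_v3` are illustrative and not taken from any particular tool.

```python
def _check_range(score):
    """Reject values outside the 0-10 scoring range."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS scores range from 0.0 to 10.0, got {score}")


def severity_v2(score):
    """Map a score to the three-level CVSSv2 qualitative scale."""
    _check_range(score)
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    return "High"


def severity_v3(score):
    """Map a score to the five-level CVSSv3 qualitative scale."""
    _check_range(score)
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


for score in (0.0, 3.9, 6.9, 8.9, 9.8):
    print(score, severity_v2(score), severity_v3(score))
```

The same numeric score can carry a different label on each scale: 9.8 is High under the v2 ranges but Critical under v3, and 0.0 is Low under v2 but None under v3.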

CVSSv3 Impact on Scoring

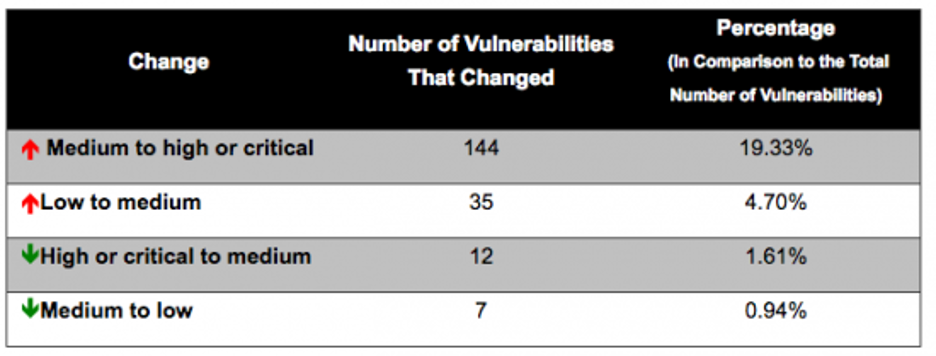

One widely shared criticism of CVSSv3 is that the change in scoring methodology increased the severity of too many vulnerabilities to High or to Critical. Cisco conducted a study on this topic and found that the average base score increased from 6.5 in CVSSv2 to 7.4 in CVSSv3. This means that the average vulnerability increased in qualitative severity from “Medium” to “High.” The same study concluded that far more vulnerabilities increased in severity than decreased.

Nearly 25% of vulnerabilities increased in severity vs less than 3% that decreased.

Conclusion

Despite numerous revisions and substantial progress over the years, CVSS still has shortcomings to be addressed. For example, CVSSv3 still has flaws in data confidentiality impact ratings. Despite these challenges, it has evolved into a useful tool and provides a common vocabulary by which vendors and enterprises alike can discuss the severity of vulnerabilities.

While CVSS scores can and should be an important part of your vulnerability management program, keep in mind that the widely published CVSS score for a vulnerability can be misleading, as it typically represents the Base score only. Organizations must take their environment into account when incorporating these scores into their infosec programs. Progressive infosec teams have determined that the most appropriate path forward is to factor these scores in as one of several components in a risk-based vulnerability management program.